The E-Bike Motor Assembly: Towards Advanced Robotic Manipulation for Flexible Manufacturing

Bosch Research Blog | Posted by Leonel Rozo, 2024-07-02

Remember struggling to bike uphill on a windy day? Electric bikes, or e-bikes, are changing the game for commuters and casual cyclists alike. They are far from a niche product: in Europe alone, sales soared to over five million units in 2021, and experts predict that half of all new bikes sold could soon be electric.

This surge in popularity presents exciting opportunities, but also significant hurdles for manufacturers. E-bike motor production needs to be both high-volume to meet demand and high-quality to ensure reliability in everyday use. However, this is not a one-size-fits-all market. Companies like Bosch offer a wide variety of options, and new variants are constantly emerging. Traditional manufacturing struggles to adapt to such high variance and frequent product updates, which is where robot-based flexible manufacturing systems come in. These systems make it possible to build production lines that are adaptable, efficient, and capable of delivering the high quality the e-bike revolution requires, and they could serve other products facing similar challenges as well.

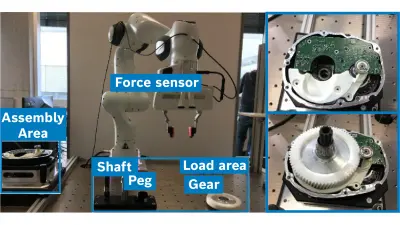

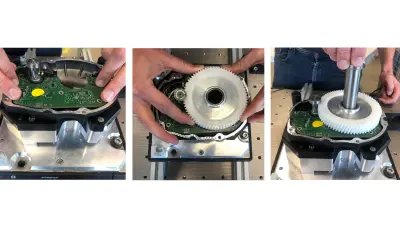

E-bike motors pose a unique challenge for robotic assembly because of their intricate components and high-precision requirements. As part of the lighthouse activity AMIRA, we investigated these challenges by assembling a subset of the motor's parts, a practical approach that let us uncover the key hurdles robots must overcome to thrive in complex manufacturing environments. To facilitate this analysis, we designed a simplified e-bike motor assembly workstation that mimics the core functionalities of a real-world assembly line. The workstation consists of a loading platform from which components are picked and an assembly station where the robot manipulates them. A predefined sequence of subtasks guides the robot through the assembly process. We focus particularly on the following four subtasks, which illustrate the range of challenges robots encounter:

- PCB press-fit assembly: A printed circuit board (PCB) needs to be pressed firmly onto three pins on the motor base. We assume that the PCB has already been pre-positioned (possibly by hand), and the robot performs the final insertion.

- Mounting a spur gear: A gear needs to be gently slid onto the rotor casing and precisely positioned. This requires the robot to place the gear, guide it to the correct location, and secure it with a gentle push.

- Inserting a shaft: The robot must insert a drive shaft through the gear's opening and into a hole in the motor casing. This involves precise placement, careful adjustment for alignment, and a final push to secure it.

- Sliding and rotating a peg: A peg needs to be positioned accurately between the shaft and the gear. Its base must be within 1 mm of the top of the shaft. Once positioned, the peg must be slid down the shaft and rotated to align with a corresponding tooth.
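The predefined subtask sequence can be pictured as an ordered plan in which a step may carry a precondition, such as the 1 mm tolerance for the peg. The following is a minimal sketch under simplifying assumptions: the names `Subtask`, `peg_ready`, and `run_plan`, the state keys, and the idea of treating the tolerance as a purely vertical gap are all hypothetical illustrations, not part of the described system.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical world state: heights in millimetres (assumption for illustration).
State = Dict[str, float]

PEG_TOLERANCE_MM = 1.0  # peg base must be within 1 mm of the top of the shaft


def peg_ready(state: State) -> bool:
    """Precondition for the peg subtask: vertical gap between peg base and shaft top <= 1 mm."""
    gap = abs(state["peg_base_z"] - state["shaft_top_z"])
    return gap <= PEG_TOLERANCE_MM


@dataclass
class Subtask:
    name: str
    # By default a subtask has no precondition.
    precondition: Callable[[State], bool] = lambda state: True


# The four subtasks in the order described above.
PLAN: List[Subtask] = [
    Subtask("pcb_press_fit"),
    Subtask("mount_spur_gear"),
    Subtask("insert_shaft"),
    Subtask("slide_and_rotate_peg", precondition=peg_ready),
]


def run_plan(plan: List[Subtask], state: State) -> List[str]:
    """Walk the plan in order and stop at the first subtask whose precondition fails."""
    done = []
    for subtask in plan:
        if not subtask.precondition(state):
            break
        done.append(subtask.name)  # a real system would execute the motion skill here
    return done


print(run_plan(PLAN, {"peg_base_z": 40.6, "shaft_top_z": 40.0}))  # peg within tolerance
print(run_plan(PLAN, {"peg_base_z": 42.5, "shaft_top_z": 40.0}))  # stops before the peg
```

With a 0.6 mm gap all four subtasks run; with a 2.5 mm gap the plan halts before the peg step, which is where a real system would re-position the peg rather than simply stop.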

These tasks showcase the complexity of robot manipulation in e-bike motor assembly, which involves precise positioning, gentle force application, and accurate alignment. This is where our research on robot programming comes in.

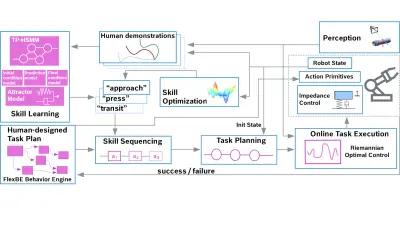

Our research proposes a novel approach that allows robots to be programmed on-site for flexible automation tasks through human demonstration. This approach specifically addresses the challenges identified in the e-bike motor assembly case study, in which the robot must use vision sensors to locate and grasp objects and then place them skillfully according to the assembly plan. Moving beyond unstructured machine learning, we propose a structured, compositional approach that leverages prior knowledge and task decomposition to achieve efficient and generalizable robot control. The proposed integration framework consists of five key components:

- Visual perception: The system estimates the position and orientation (pose) of objects freely placed on the loading platform.

- Learning from demonstration: A learning component analyzes the visual information and demonstration data. The demonstration data is generated by a human operator physically guiding the robot through the desired movements for each skill (e.g., grasping a specific motor component). This allows the robot to build an internal model of how to perform each skill based on the object and its pose.

- Skill sequencing: Based on a human-designed task plan, this module combines individual motion skills into a complete program for the entire assembly task.

- Adaptive task execution: During execution, the robot reproduces the planned task, but the motion skills can adapt to the real-time state of the objects perceived by the vision system. This lets the robot handle slight variations in object placement.

- Task optimization (optional): This final component can further refine the motion skills based on performance metrics, potentially improving efficiency or precision over time.
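To make the idea of a skill that adapts to object pose concrete, here is a minimal sketch of one simple way to do it: demonstrated waypoints are re-expressed relative to the object pose observed during the demonstration, then mapped back to the world frame using the newly perceived pose. This rigid re-anchoring is a deliberately simplified stand-in, not the framework's actual learning model. It assumes planar poses `(x, y, theta)` on the loading platform, and the function names `to_object_frame`, `to_world_frame`, and `adapt_demo` are hypothetical.

```python
import math
from typing import List, Tuple

Pose2D = Tuple[float, float, float]  # (x, y, theta) on the platform, theta in radians
Point = Tuple[float, float]


def to_object_frame(points: List[Point], obj: Pose2D) -> List[Point]:
    """Express world-frame waypoints relative to the object pose seen during the demonstration."""
    ox, oy, th = obj
    c, s = math.cos(th), math.sin(th)
    out = []
    for x, y in points:
        dx, dy = x - ox, y - oy
        # Apply the inverse (transposed) rotation R(theta)^T to the offset.
        out.append((c * dx + s * dy, -s * dx + c * dy))
    return out


def to_world_frame(points: List[Point], obj: Pose2D) -> List[Point]:
    """Map object-relative waypoints into the world frame for a given object pose."""
    ox, oy, th = obj
    c, s = math.cos(th), math.sin(th)
    return [(ox + c * x - s * y, oy + s * x + c * y) for x, y in points]


def adapt_demo(demo: List[Point], demo_obj: Pose2D, new_obj: Pose2D) -> List[Point]:
    """Re-target a demonstrated path from the demonstration-time object pose to a new one."""
    return to_world_frame(to_object_frame(demo, demo_obj), new_obj)


# Demonstration: approach the object from its -x side while it sits at (0.5, 0.2), unrotated.
demo_path = [(0.4, 0.2), (0.5, 0.2)]
# Perception now reports the object at (0.3, 0.6), rotated by 90 degrees.
adapted = adapt_demo(demo_path, (0.5, 0.2, 0.0), (0.3, 0.6, math.pi / 2))
print(adapted)  # approach now comes from the object's rotated -x side, ending at (0.3, 0.6)
```

Because the path is stored relative to the object, the same demonstration remains valid wherever the part is placed on the platform. Learned models can go further than this rigid mapping, for instance by capturing which parts of a motion matter most relative to each object.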

Our framework takes a hybrid approach, applying data-driven methods selectively at specific stages. This allows us to harness the power of machine learning while still benefiting from the robustness of established model-based control, planning, and software modules.

Developing integrated learning systems such as our robotic manipulation framework for unstructured and variable environments demands a focus on two key aspects: adaptability and explainability. Adaptability refers to the robot's ability to adjust task execution to the currently observed environment. We built adaptability into our framework at multiple levels: individual motion skills adjust to variations in object pose, and the system can even refine skills that are executed with lower accuracy, for example because of imprecise human demonstrations or uncertainties in the perception or control loops. In addition, the skill sequencing module adapts the task plan to real-time information about the environment and the task state.

Explainability ensures that users and experts can understand the robot's past and future actions, and our system supports it through several features. First, the demonstration-based learning approach provides a clear basis for predicting robot behavior. Second, users can visualize the planned skill trajectory and inspect it before execution. Finally, the system visualizes in real time the skill currently being performed, along with the completed and remaining parts of the task.
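The combination of a sequencer that reacts to the task state and a progress view listing completed, current, and remaining skills can be sketched in a few lines. This is a hypothetical illustration, not the framework's implementation. It assumes that a perception step reports which skills' effects are already achieved as a dictionary of booleans, and the names `next_skill`, `progress`, and `TASK_PLAN` are invented for the example.

```python
from typing import Dict, List, Optional

# Hypothetical task plan: skill names in the human-designed order.
TASK_PLAN: List[str] = ["pcb_press_fit", "mount_spur_gear", "insert_shaft", "slide_and_rotate_peg"]


def next_skill(plan: List[str], achieved: Dict[str, bool]) -> Optional[str]:
    """Pick the first skill whose effect is not yet observed; None means the task is complete."""
    for skill in plan:
        if not achieved.get(skill, False):
            return skill
    return None


def progress(plan: List[str], achieved: Dict[str, bool]) -> Dict[str, List[str]]:
    """Split the plan into completed, current, and remaining skills for a progress display."""
    current = next_skill(plan, achieved)
    completed = [s for s in plan if achieved.get(s, False)]
    remaining = [s for s in plan if not achieved.get(s, False) and s != current]
    return {"completed": completed, "current": [current] if current else [], "remaining": remaining}


# Suppose perception reports that the PCB is already pressed in (e.g., done manually),
# so the sequencer skips it and starts with the gear.
print(progress(TASK_PLAN, {"pcb_press_fit": True}))
```

Re-evaluating `achieved` from sensor data before each step is what makes the sequencer reactive: if a step is already done, or an earlier one is undone, the next skill changes accordingly, and the same dictionary drives the completed/remaining display.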


We believe that this research paves the way for a future in which robots play a more prominent role in flexible and adaptable manufacturing processes. To further enhance our framework, future research could explore incorporating in-hand cameras and tactile sensing. Such additional sensory input would enable more accurate real-time object pose tracking during grasping, which is crucial for handling variations in object placement. Multi-modal tracking of this kind would, in turn, call for online skill adaptation techniques that allow the robot to adjust its movements based on continuously updated sensory information. By investing in these areas, we can further empower robots to excel in dynamic manufacturing environments.

What are your thoughts on this topic?

Please feel free to share them or to contact me directly.

Author: Dr. Leonel Rozo

Leonel Rozo is currently a lead research scientist on Geometric Machine Learning for Robotics at the Bosch Research Campus Renningen, Germany. He joined Bosch after working as a team leader and postdoctoral researcher at the Italian Institute of Technology in Genoa, Italy. Leonel combines machine learning techniques with differential geometry and control theory to teach robots motion skills through human demonstration and reinforcement learning. His research has been applied to (dual-arm) manipulation tasks, human-robot collaboration, and human motion generation.

Further Information

Article on the ScienceDirect website: “The e-Bike motor assembly: Towards advanced robotic manipulation for flexible manufacturing”:

https://www.sciencedirect.com/science/article/abs/pii/S0736584523001126?dgcid=author

Acknowledgments

Special thanks to the full team involved in this project: Andras G. Kupcsik, Philipp Schillinger, Meng Guo, Robert Krug, Niels van Duijkeren, Markus Spies, Patrick Kesper, Sabrina Schmedding, Hanna Ziesche, Mathias Bürger and Kai O. Arras.